Improvement: In time, researchers concluded that "Attention Is All You Need": the RNN components can be dropped entirely, and the attention mechanism alone gives better performance. This was the seminal paper (Vaswani et al., 2017) that continues to transform the AI landscape.

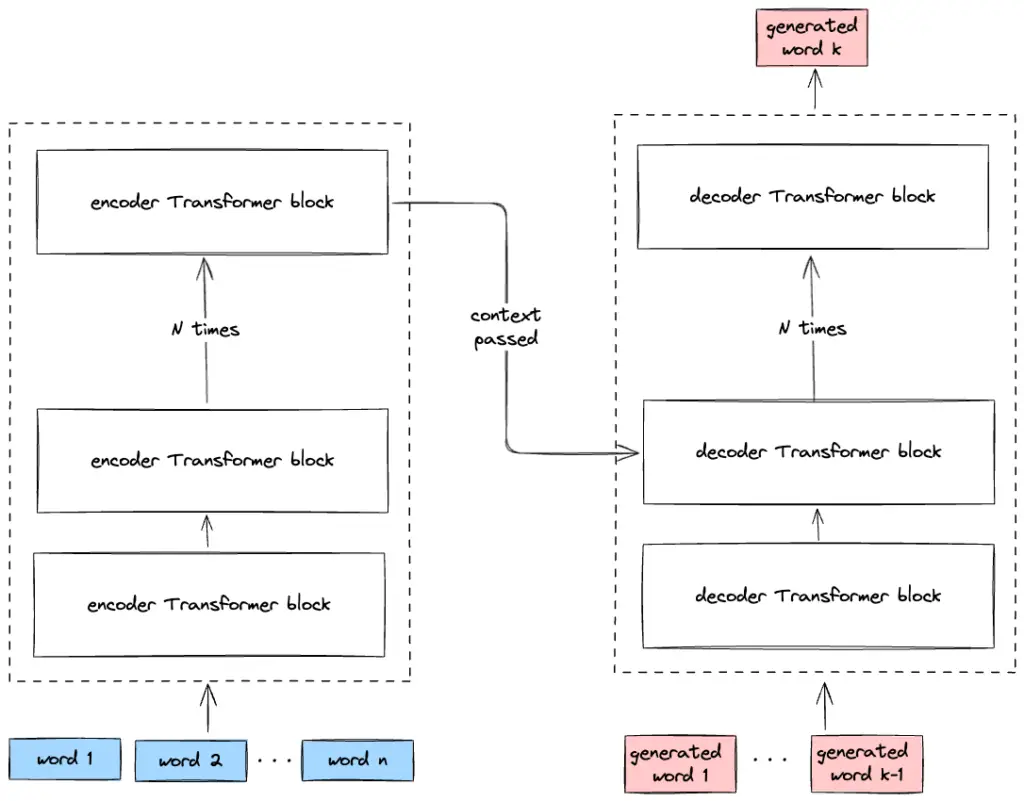

Technical: The paper reports a better BLEU score for translation than the best seq2seq models of the time, at a lower training cost. Transformers are also far more parallelizable than RNNs, which must process tokens one after another. Notice that the original encoder-decoder structure from seq2seq is retained here. The paper spawned many architectures, two of which have stood the test of time: BERT and GPT.
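To make the parallelism point concrete, here is a minimal sketch of scaled dot-product attention, the core operation of the paper, written in plain Python. The function names and the toy input are illustrative assumptions, and the learned query/key/value projections, multiple heads, and masking of a real transformer are left out.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of row vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    # Each query row is handled independently of the others, so a real
    # implementation replaces this loop with one batched matrix multiply.
    # An RNN cannot do that: step t needs the hidden state from step t-1.
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy self-attention: three tokens with made-up 2-dimensional embeddings.
# Using x as queries, keys, and values skips the learned projections.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(x, x, x)
```

Each row of `weights` sums to 1 and says how much one token attends to every other token. Each row of `outputs` is the corresponding weighted average of the value vectors.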